Solve Differential Equations in Python

source

Differential equations can be solved with different methods in Python. Below are examples that show how to solve differential equations with (1) GEKKO Python, (2) Euler’s method, (3) the ODEINT function from Scipy.Integrate. Additional information is provided on using APM Python for parameter estimation with dynamic models and scale-up to large-scale problems.

1. GEKKO Python

GEKKO Python solves the differential equations with tank overflow conditions. When the first tank overflows, the liquid is lost and does not enter tank 2. The model is composed of variables and equations. The differential variables (h1 and h2) are solved with a mass balance on both tanks.

import matplotlib.pyplot as plt

from gekko import GEKKOm = GEKKO()# integration time points

m.time = np.linspace(0,10)

# constants

c1 = 0.13

c2 = 0.20

Ac = 2 # m^2

# inflow

qin1 = 0.5 # m^3/hr

# variables

h1 = m.Var(value=0,lb=0,ub=1)

h2 = m.Var(value=0,lb=0,ub=1)

overflow1 = m.Var(value=0,lb=0)

overflow2 = m.Var(value=0,lb=0)

# outflow equations

qin2 = m.Intermediate(c1 * h1**0.5)

qout1 = m.Intermediate(qin2 + overflow1)

qout2 = m.Intermediate(c2 * h2**0.5 + overflow2)

# mass balance equations

m.Equation(Ac*h1.dt()==qin1-qout1)

m.Equation(Ac*h2.dt()==qin2-qout2)

# minimize overflow

m.Obj(overflow1+overflow2)

# set options

m.options.IMODE = 6 # dynamic optimization

# simulate differential equations

m.solve()

# plot results

plt.figure(1)

plt.plot(m.time,h1,‘b-‘)

plt.plot(m.time,h2,‘r–‘)

plt.xlabel(‘Time (hrs)’)

plt.ylabel(‘Height (m)’)

plt.legend([‘height 1’,‘height 2’])

plt.show()

2. Discretize with Euler’s Method

Euler’s method is used to solve a set of two differential equations in Excel and Python.

import matplotlib.pyplot as pltdef tank(c1,c2):

Ac = 2 # m^2

qin = 0.5 # m^3/hr

dt = 0.5 # hr

tf = 10.0 # hrh1 = 0

h2 = 0

t = 0

ts = np.empty(21)

h1s = np.empty(21)

h2s = np.empty(21)

i = 0

while t<=10.0:

ts[i] = t

h1s[i] = h1

h2s[i] = h2

qout1 = c1 * pow(h1,0.5)

qout2 = c2 * pow(h2,0.5)

h1 = (qin-qout1)*dt/Ac + h1

if h1>1:

h1 = 1

h2 = (qout1-qout2)*dt/Ac + h2

i = i + 1

t = t + dt

# plot data

plt.figure(1)

plt.plot(ts,h1s)

plt.plot(ts,h2s)

plt.xlabel(“Time (hrs)”)

plt.ylabel(“Height (m)”)

plt.show()

# call function

tank(0.13,0.20)

3. SciPy.Integrate ODEINT Function

See Introduction to Using ODEINT for more information on solving differential equations with SciPy.

import matplotlib.pyplot as plt

from scipy.integrate import odeintdef tank(h,t):

# constants

c1 = 0.13

c2 = 0.20

Ac = 2 # m^2

# inflow

qin = 0.5 # m^3/hr

# outflow

qout1 = c1 * h[0]**0.5

qout2 = c2 * h[1]**0.5

# differential equations

dhdt1 = (qin – qout1) / Ac

dhdt2 = (qout1 – qout2) / Ac

# overflow conditions

if h[0]>=1 and dhdt1>=0:

dhdt1 = 0

if h[1]>=1 and dhdt2>=0:

dhdt2 = 0

dhdt = [dhdt1,dhdt2]

return dhdt# integrate the equations

t = np.linspace(0,10) # times to report solution

h0 = [0,0] # initial conditions for height

y = odeint(tank,h0,t) # integrate

# plot results

plt.figure(1)

plt.plot(t,y[:,0],‘b-‘)

plt.plot(t,y[:,1],‘r–‘)

plt.xlabel(‘Time (hrs)’)

plt.ylabel(‘Height (m)’)

plt.legend([‘h1’,‘h2’])

plt.show()

APM Python DAE Integrator and Optimizer

This tutorial gives step-by-step instructions on how to simulate dynamic systems. Dynamic systems may have differential and algebraic equations (DAEs) or just differential equations (ODEs) that cause a time evolution of the response. Below is an example of solving a first-order decay with the APM solver in Python. The objective is to fit the differential equation solution to data by adjusting unknown parameters until the model and measured values match.

Scale-up for Large Sets of Equations

source

Additional Material

This same example problem is also demonstrated with Spreadsheet Programming and in the Matlab programming language. Another example problem demonstrates how to calculate the concentration of CO gas buildup in a room.

Differential equations in Python

There is often no analytical solution to systems with nonlinear, interacting dynamics. We can, however, examine the dynamics using numerical methods. Consider the predator-prey system of equations, where there are fish (xx) and fishing boats (yy):dxdtdydt=x(2−y−x)=−y(1−1.5x)dxdt=x(2−y−x)dydt=−y(1−1.5x)

We use the built-in SciPy function odeint to solve the system of ordinary differential equations, which relies on lsoda from the FORTRAN library odepack. First, we define a callable function to compute the time derivatives for a given state, indexed by the time period. We also load libraries that we’ll use later to animate the results.

import matplotlib.animation as animation

from scipy.integrate import odeint

from numpy import arange

from pylab import *

def BoatFishSystem(state, t):

fish, boat = state

d_fish = fish * (2 - boat - fish)

d_boat = -boat * (1 - 1.5 * fish)

return [d_fish, d_boat]Then, we define the state-space and intital conditions, so that we can solve the system of linear equations. The result is animated below. (The code for some of the graphical bells and whistles is omitted for the sake of exposition.)

t = arange(0, 20, 0.1)

init_state = [1, 1]

state = odeint(BoatFishSystem, init_state, t)

fig = figure()

xlabel('number of fish')

ylabel('number of boats')

plot(state[:, 0], state[:, 1], 'b-', alpha=0.2)

def animate(i):

plot(state[0:i, 0], state[0:i, 1], 'b-')

ani = animation.FuncAnimation(fig, animate, interval=1)

show()

The red, dashed lines indicate the nullclines, derived from the first-order conditions of the equation system. These lines delineate the phase space of the top graph; and the lines intersect at the equilibrium levels of fish and boats.dxdt=0dydt=0⟹y=2−x⟹x=2/3dxdt=0⟹y=2−xdydt=0⟹x=2/3

It is easy to break this result by messing with the solver parameters or the size of the time steps (relative to the total time), demonstrating the fragility of the result for real-world applications. If, for example, we increase the step size from 0.1 to 5, we lose most of the dynamics that characterize the system. The same goes for fiddling with the iteration parameters of the ODE solver.

Suppose we wanted to figure out the behavior of this system near the equilibrium before going through the numerical estimation. First, we linearize the system near the equilibrium, yielding the Jacobian. Let f(x,y)=dx/dtf(x,y)=dx/dt and g(x,y)=dy/dtg(x,y)=dy/dt, then the nonlinear system can be approximated by the following linear system near the equilibrim (x¯,y¯)(x¯,y¯):f(x,y)g(x,y)≈f(x¯,y¯)+∂f(x¯,y¯)∂x(x−x¯)+∂f(x¯,y¯)∂y(y−y¯)≈g(x¯,y¯)+∂g(x¯,y¯)∂x(x−x¯)+∂g(x¯,y¯)∂y(y−y¯)f(x,y)≈f(x¯,y¯)+∂f(x¯,y¯)∂x(x−x¯)+∂f(x¯,y¯)∂y(y−y¯)g(x,y)≈g(x¯,y¯)+∂g(x¯,y¯)∂x(x−x¯)+∂g(x¯,y¯)∂y(y−y¯)

Noting that f(x¯,y¯)=g(x¯,y¯)=0f(x¯,y¯)=g(x¯,y¯)=0 by definition of the equilibrium, the nonlinear system is approximated by the linear system defined by the Jacobian, evaluated at the equilibrium:⎛⎝⎜∂f∂x∂g∂x∂f∂y∂g∂y⎞⎠⎟=(2−y−2×1.5y−x1−1.5x)=(−232−230)(∂f∂x∂f∂y∂g∂x∂g∂y)=(2−y−2x−x1.5y1−1.5x)=(−23−2320)

Then the eigenvalues λλ are given by:∣∣∣−23−λ2−23−λ∣∣∣=0⟹λ2+23λ+43=0⟹λ=−23±i449−−−√|−23−λ−232−λ|=0⟹λ2+23λ+43=0⟹λ=−23±i449

The real part is negative and there is an imaginary component, such that the system will oscillate around the equilibrium tending inward. This behavior is reflected in the animation. I guess we didn’t really have to go through all this work; but whatever, it’s useful for other problems.

Schaefer model

Bjorndal and Conrad (1987) modelled open-access exploitation of North Sea herring between 1963 – 1977. Their model is similar to the one above, except slightly more complicated. Let fish stock (xx) and fishing effort (yy) be modelled by the following system:dxdtdydt=gx(1−xK)−kxy=kpx−c,dxdt=gx(1−xK)−kxydydt=kpx−c,

where kk is a catchability constant, gg is the intrinsic growth rate of the fish stock, KK is the carrying capacity, pp is the fish price, and cc is the marginal cost of one unit of effort. Then, through the same process as above, we find that the equilibrium point (at the intersection of the nullclines) is:(cpk,gk(1−cpkK))(cpk,gk(1−cpkK))

Using the constants in Bjorndal and Conrad (1987) we model the system similarly:

price = 735

effort_scale = 2e-6

marginal_cost = 556380

carrying_capacity = 3.2e6

intrinsic_growth = 0.08

catchability = marginal_cost / (price * 0.25e6)

def BoatFishSystem(state, t, time_scale=0.1):

stock, effort = state

net_growth = intrinsic_growth * stock * (1 - (stock/carrying_capacity))

harvest = catchability * stock * effort

d_stock = net_growth - harvest

d_effort = effort_scale * (price * catchability * stock - marginal_cost)

return [d_stock * time_scale, d_effort * time_scale]

The Jacobian for this system evaluated at the equilibrium is:(−gcpkKpk−kx0)(−gcpkK−kxpk0)

I don’t want to solve this by hand, so I plug it into Python.

from numpy import linalg

values, vectors = linalg.eig(J_equil)

print values

>>> [-0.003125+41.01820462j -0.003125-41.01820462j]The eigenvalues are λ=−0.0031±41.0182iλ=−0.0031±41.0182i. Once again, the behavior seen in the numerical approximation is confirmed by math. The system oscillates and tends inward.

Shit can get weird. The numerical approximations, while very good in Python, can misrepresent the system of equations, given certain parameters. Specifically, the system is solved through an iterative process of calculating the linear change at each interval, approximating the continuous system. Choosing certain step sizes and tolerances will send Python or Matlab into a tailspin. Although, the checks and balances within odeint are really quite good, such that it’s way easier to break the numerical approximation if you try to write it explicitly in a for-loop.

More by ipython-books.github.io

Simulating an ordinary differential equation with SciPy

This is one of the 100+ free recipes of the IPython Cookbook, Second Edition, by Cyrille Rossant, a guide to numerical computing and data science in the Jupyter Notebook. The ebook and printed book are available for purchase at Packt Publishing.

▶ Text on GitHub with a CC-BY-NC-ND license

▶ Code on GitHub with a MIT license

▶ Go to Chapter 12 : Deterministic Dynamical Systems

▶ Get the Jupyter notebook

Ordinary Differential Equations (ODEs) describe the evolution of a system subject to internal and external dynamics. Specifically, an ODE links a quantity depending on a single independent variable (time, for example) to its derivatives. In addition, the system can be under the influence of external factors. A first-order ODE can typically be written as:y′(t)=f(t,y(t))y′(t)=f(t,y(t))

More generally, an nn-th order ODE involves successive derivatives of yy until the order nn. The ODE is said to be linear or nonlinear depending on whether ff is linear in yy or not.

ODEs naturally appear when the rate of change of a quantity depends on its value. Therefore, ODEs are found in many scientific disciplines such as mechanics (evolution of a body subject to dynamic forces), chemistry (concentration of reacting products), biology (spread of an epidemic), ecology (growth of a population), economics, and finance, among others.

Whereas simple ODEs can be solved analytically, many ODEs require a numerical treatment. In this recipe, we will simulate a simple linear second-order autonomous ODE, describing the evolution of a particle in the air subject to gravity and viscous resistance. Although this equation could be solved analytically, here we will use SciPy to simulate it numerically.

How to do it…

1. Let’s import NumPy, SciPy (the integrate package), and matplotlib:

import numpy as np import scipy.integrate as spi import matplotlib.pyplot as plt %matplotlib inline

2. We define a few parameters appearing in our model:

m = 1. # particle's mass k = 1. # drag coefficient g = 9.81 # gravity acceleration

3. We have two variables: xx and yy (two dimensions). We note u=(x,y)u=(x,y). The ODE that we are going to simulate is:u′′=−kmu′+gu″=−kmu′+g

Here, gg is the gravity acceleration vector.

In order to simulate this second-order ODE with SciPy, we can convert it to a first-order ODE (another option would be to solve u′u′ first before integrating the solution). To do this, we consider two 2D variables: uu and u′u′. We note v=(u,u′)v=(u,u′). We can express v′v′ as a function of vv. Now, we create the initial vector v0v0 at time t=0t=0: it has four components.

# The initial position is (0, 0). v0 = np.zeros(4) # The initial speed vector is oriented # to the top right. v0[2] = 4. v0[3] = 10.

4. Let’s create a Python function ff that takes the current vector v(t0)v(t0) and a time t0t0 as arguments (with optional parameters) and that returns the derivative v′(t0)v′(t0):

def f(v, t0, k):

# v has four components: v=[u, u'].

u, udot = v[:2], v[2:]

# We compute the second derivative u'' of u.

udotdot = -k / m * udot

udotdot[1] -= g

# We return v'=[u', u''].

return np.r_[udot, udotdot]

5. Now, we simulate the system for different values of kk. We use the SciPy odeint() function, defined in the scipy.integrate package.

Starting with SciPy 1.0, the generic

scipy.integrate.solve_ivp()function can be used instead of the old functionodeint():

fig, ax = plt.subplots(1, 1, figsize=(8, 4))

# We want to evaluate the system on 30 linearly

# spaced times between t=0 and t=3.

t = np.linspace(0., 3., 30)

# We simulate the system for different values of k.

for k in np.linspace(0., 1., 5):

# We simulate the system and evaluate $v$ on the

# given times.

v = spi.odeint(f, v0, t, args=(k,))

# We plot the particle's trajectory.

ax.plot(v[:, 0], v[:, 1], 'o-', mew=1, ms=8,

mec='w', label=f'k={k:.1f}')

ax.legend()

ax.set_xlim(0, 12)

ax.set_ylim(0, 6)

In the preceding figure, the most outward trajectory (blue) corresponds to drag-free motion (without air resistance). It is a parabola. In the other trajectories, we can observe the increasing effect of air resistance, parameterized with kk.

How it works…

Let’s explain how we obtained the differential equation from our model. Let u=(x,y)u=(x,y) encode the 2D position of our particle with mass mm. This particle is subject to two forces: gravity mg=(0,−9.81⋅m)mg=(0,−9.81⋅m) and air drag F=−ku′F=−ku′. This last term depends on the particle’s speed and is only valid at low speed. With higher speeds, we need to use more complex nonlinear expressions.

Now, we use Newton’s second law of motion in classical mechanics. This law states that, in an inertial reference frame, the mass multiplied by the acceleration of the particle is equal to the sum of all forces applied to that particle. Here, we obtain:m⋅u′′=F+mgm⋅u″=F+mg

We immediately obtain our second-order ODE:u′′=−kmu′+gu″=−kmu′+g

We transform it into a single-order system of ODEs, with v=(u,u′)v=(u,u′):v′=(u′,u′′)=(u′,−kmu′+g)v′=(u′,u″)=(u′,−kmu′+g)

The last term can be expressed as a function of vv only.

The SciPy odeint() function is a black-box solver; we simply specify the function that describes the system, and SciPy solves it automatically. This function leverages the FORTRAN library ODEPACK, which contains well-tested code that has been used for decades by many scientists and engineers.

The newer solve_ivb() function offers a common API for Python implementations of various ODE solvers.

An example of a simple numerical solver is the Euler method. To numerically solve the autonomous ODE y′=f(y)y′=f(y), the method consists of discretizing time with a time step dtdt and replacing y′y′ with a first-order approximation:y′(t)≃y(t+dt)−y(t)dty′(t)≃y(t+dt)−y(t)dt

Then, starting from an initial condition y0=y(t0)y0=y(t0), the method evaluates yy successively with the following recurrence relation:yn+1=yn+dt⋅f(yn)witht=n⋅dt,yn=y(n⋅dt)yn+1=yn+dt⋅f(yn)witht=n⋅dt,yn=y(n⋅dt)

There’s more…

Here are a few references:

- The documentation of the integrate package in SciPy available at http://docs.scipy.org/doc/scipy/reference/integrate.html

- The new

solve_ivp()function, available in SciPy 1.0 and later, at https://docs.scipy.org/doc/scipy/reference/generated/scipy.integrate.solve_ivp.html - ODEs on Wikipedia, available at https://en.wikipedia.org/wiki/Ordinary_differential_equation

- ODEs lectures on Awesome Math, at https://github.com/rossant/awesome-math/#ordinary-differential-equations

- Newton’s laws of motion on Wikipedia, available at https://en.wikipedia.org/wiki/Newton‘s_laws_of_motion

- Air resistance on Wikipedia, available at https://en.wikipedia.org/wiki/Drag_%28physics%29

- Some numerical methods for ODEs described at https://en.wikipedia.org/wiki/Numerical_methods_for_ordinary_differential_equations

- The Euler method on Wikipedia, available at https://en.wikipedia.org/wiki/Euler_method

- Documentation of the ODEPACK package in FORTRAN available at http://www.netlib.org/odepack/opks-sum

This chapter is taken from the book A Primer on Scientific Programming with Python by H. P. Langtangen, 5th edition, Springer, 2016.

Scalar ordinary differential equations

We shall in this document work with ordinary differential equations (ODEs) written on the abstract formu′(t)=f(u(t),t).(1)(1)u′(t)=f(u(t),t).There is an infinite number of solutions to such an equation, so to make the solution u(t)u(t) unique, we must also specify an initial conditionu(0)=U0.(2)(2)u(0)=U0.Given f(u,t)f(u,t) and U0U0, our task is to compute u(t)u(t).

At first sight, (1) is only a first-order differential equation, since only u′u′ and not higher-order derivatives like u′u′ are present in the equation. However, equations with higher-order derivatives can also be written on the abstract form (1) by introducing auxiliary variables and interpreting uu and ff as vector functions. This rewrite of the original equation leads to a system of first-order differential equations and will be treated in the section Systems of ordinary differential equations. The bottom line is that a very large family of differential equations can be written as (1). Forthcoming examples will provide evidence.

We shall first assume that u(t)u(t) is a scalar function, meaning that it has one number as value, which can be represented as afloat object in Python. We then refer to (1) as a scalar differential equation. The counterpart vector function means that uu is a vector of scalar functions and the equation is known as a system of ODEs (also known as a vector ODE). The value of a vector function is a list or array in a program. Systems of ODEs are treated in the section Systems of ordinary differential equations.

Examples on right-hand-side functions

To write a specific differential equation on the form (1) we need to identify what the ff function is. Say the equation readsy2y′=x,y(0)=Y,y2y′=x,y(0)=Y,with y(x)y(x) as the unknown function. First, we need to introduce uu and tt as new symbols: u=yu=y, t=xt=x. This gives the equivalent equation u2u′=tu2u′=t and the initial condition u(0)=Yu(0)=Y. Second, the quantity u′u′ must be isolated on the left-hand side of the equation in order to bring the equation on the form (1). Dividing by u2u2 givesu′=tu−2.u′=tu−2.This fits the form (1), and the f(u,t)f(u,t) function is simply the formula involving uu and tt on the right-hand side:f(u,t)=tu−2.f(u,t)=tu−2.The tt parameter is very often absent on the right-hand side such that ff involves uu only.

Let us list some common scalar differential equations and their corresponding ff functions. Exponential growth of money or populations is governed byu′=αu,(3)(3)u′=αu,where α>0α>0 is a given constant expressing the growth rate of uu. In this case,f(u,t)=αu.(4)(4)f(u,t)=αu.A related model is the logistic ODE for growth of a population under limited resources:u′=αu(1−uR),(5)(5)u′=αu(1−uR),where α>0α>0 is the initial growth rate and RR is the maximum possible value of uu. The corresponding ff isf(u,t)=αu(1−uR).(6)(6)f(u,t)=αu(1−uR).Radioactive decay of a substance has the modelu′=−au,(7)(7)u′=−au,where a>0a>0 is the rate of decay of uu. Here,f(u,t)=−au.(8)(8)f(u,t)=−au.A body falling in a fluid can be modeled byu′+b|u|u=g,(9)(9)u′+b|u|u=g,where b>0b>0 models the fluid resistance, gg is the acceleration of gravity, and uu is the body’s velocity (see Exercise 8: Simulate a falling or rising body in a fluid). By solving for u′u′ we findf(u,t)=−b|u|u+g.(10)(10)f(u,t)=−b|u|u+g.Finally, Newton’s law of cooling is the ODEu′=−h(u−s),(11)(11)u′=−h(u−s),where uu is the temperature of a body, h>0h>0 is a proportionality constant, normally to be estimated from experiments, and ss is the temperature of the surroundings. Obviously,f(u,t)=−h(u−s).(12)(12)f(u,t)=−h(u−s).

The Forward Euler scheme

Our task now is to define numerical methods for solving equations of the form (1). The simplest such method is the Forward Euler scheme. Equation (1) is to be solved for t∈(0,T]t∈(0,T], and we seek the solution uu at discrete time points ti=iΔtti=iΔt, i=1,2,…,ni=1,2,…,n. Clearly, tn=nΔt=Ttn=nΔt=T, determining the number of points nn as T/ΔtT/Δt. The corresponding values u(ti)u(ti) are often abbreviated as uiui, just for notational simplicity.

Equation (1) is to be fulfilled at all time points t∈(0,T]t∈(0,T]. However, when we solve (1) numerically, we only require the equation to be satisfied at the discrete time points t1,t2,…,tnt1,t2,…,tn. That is,u′(tk)=f(u(tk),tk),u′(tk)=f(u(tk),tk),for k=1,…,nk=1,…,n. The fundamental idea of the Forward Euler scheme is to approximate u′(tk)u′(tk) by a one-sided, forward difference:u′(tk)≈u(tk+1)−u(tk)Δt=uk+1−ukΔt.u′(tk)≈u(tk+1)−u(tk)Δt=uk+1−ukΔt.This removes the derivative and leaves us with the equationuk+1−ukΔt=f(uk,tk).uk+1−ukΔt=f(uk,tk).We assume that ukuk is already computed, so that the only unknown in this equation is uk+1uk+1, which we can solve for:uk+1=uk+Δtf(uk,tk).(13)(13)uk+1=uk+Δtf(uk,tk).This is the Forward Euler scheme for a scalar first-order differential equation u′=f(u,t)u′=f(u,t).

Equation (13) has a recursive nature. We start with the initial condition, u0=U0u0=U0, and compute u1u1 asu1=u0+Δtf(u0,t0).u1=u0+Δtf(u0,t0).Then we can continue withu2=u1+Δtf(u1,t1),u2=u1+Δtf(u1,t1),and then with u3u3 and so forth. This recursive nature of the method also demonstrates that we must have an initial condition – otherwise the method cannot start.

Function implementation

The next task is to write a general piece of code that implements the Forward Euler scheme (13). The complete original (continuous) mathematical problem is stated asu′=f(u,t), t∈(0,T],u(0)=U0,(14)(14)u′=f(u,t), t∈(0,T],u(0)=U0,while the discrete numerical problem readsu0uk+1=U0,=uk+Δtf(uk,tk), tk=kΔt,k=1,…,n, n=T/Δt.(15)(16)(15)u0=U0,(16)uk+1=uk+Δtf(uk,tk), tk=kΔt,k=1,…,n, n=T/Δt.We see that the input data to the numerical problem consist of ff, U0U0, TT, and ΔtΔt or nn. The output consists of u1,u2,…,unu1,u2,…,un and the corresponding set of time points t1,t2,…,tnt1,t2,…,tn.

Let us implement the Forward Euler method in a function ForwardEuler that takes ff, U0U0, TT, and nn as input, and that returns u0,…,unu0,…,un and t0,…,tnt0,…,tn:

import numpy as np

def ForwardEuler(f, U0, T, n):

"""Solve u'=f(u,t), u(0)=U0, with n steps until t=T."""

t = np.zeros(n+1)

u = np.zeros(n+1) # u[k] is the solution at time t[k]

u[0] = U0

t[0] = 0

dt = T/float(n)

for k in range(n):

t[k+1] = t[k] + dt

u[k+1] = u[k] + dt*f(u[k], t[k])

return u, t

Note the close correspondence between the implementation and the mathematical specification of the problem to be solved. The argument f to the ForwardEuler function must be a Python function f(u, t) implementing the f(u,t)f(u,t) function in the differential equation. In fact, f is the definition of the equation to be solved. For example, we may solve u′=uu′=u for t∈(0,3)t∈(0,3), with u(0)=1u(0)=1, and Δt=0.1Δt=0.1 by the following code utilizing the ForwardEuler function:

def f(u, t):

return u

u, t = ForwardEuler(f, U0=1, T=4, n=20)

With the u and t arrays we can easily plot the solution or perform data analysis on the numbers.

Verifying the implementation

Visual comparison

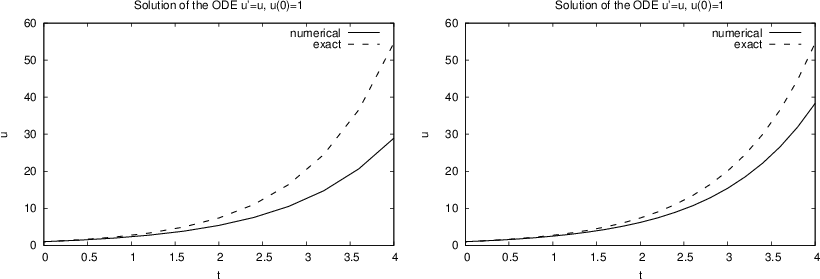

Many computational scientists and engineers look at a plot to see if a numerical and exact solution are sufficiently close, and if so, they conclude that the program works. This is, however, not a very reliable test. Consider a first try at running ForwardEuler(f, U0=1, T=4, n=10), which gives the plot to the left in Figure 1. The discrepancy between the solutions is large, and the viewer may be uncertain whether the program works correctly or not. Running n=20 should give a better solution, depicted to the right in Figure 1, but is the improvement good enough? Increasing n drives the numerical curve closer to the exact one. This brings evidence that the program is correct, but there could potentially be errors in the code that makes the curves further apart than what is implied by the numerical approximations alone. We cannot know if such a problem exists.

Figure 1: Comparison of numerical exact solution for 10 intervals (left) and 20 (intervals).

Comparing with hand calculations

A more rigorous way of verifying the implementation builds on a simple principle: we run the algorithm by hand a few times and compare the results with those in the program. For most practical purposes, it suffices to compute u1u1 and u2u2 by hand:u1=1+0.1⋅1=1.1,u2=1.1+0.1⋅1.1=1.21.u1=1+0.1⋅1=1.1,u2=1.1+0.1⋅1.1=1.21.These values are to be compared with the numbers produced by the code. A correct program will lead to deviations that are zero (to machine precision). Any such test should be wrapped in a proper test function such that it can easily be repeated later. Here, it means we make a function

def test_ForwardEuler_against_hand_calculations():

"""Verify ForwardEuler against hand calc. for 3 time steps."""

u, t = ForwardEuler(f, U0=1, T=0.2, n=2)

exact = np.array([1, 1.1, 1.21]) # hand calculations

error = np.abs(exact - u).max()

success = error < 1E-14

assert success, '|exact - u| = %g != 0' % error

The test function is written in a way that makes it trivial to integrate it in the nose testing framework. This means that the name starts with test_, there are no function arguments, and the check for passing the test is done with assert success. The test fails if the boolean variable success is False. The string after assert success is a message that will be written out if the test fails. The error measure is most conveniently a scalar number, which here is taken as the absolute value of the largest deviation between the exact and the numerical solution. Although we expect the error measure to be zero, we are prepared for rounding errors and must use a tolerance when testing if the test has passed.

Comparing with an exact numerical solution

Another effective way to verify the code, is to find a problem that can be solved exactly by the numerical method we use. That is, we seek a problem where we do not have to deal with numerical approximation errors when comparing the exact solution with the one produced by the program. It turns out that if the solution u(t)u(t) is linear in tt, the Forward Euler method will reproduce this solution exactly. Therefore, we choose u(t)=at+U0u(t)=at+U0, with (e.g.) a=0.2a=0.2 and U0=3U0=3. The corresponding ff is the derivative of uu, i.e., f(u,t)=af(u,t)=a. This is obviously a very simple right-hand side without any uu or tt. However, we can make ff more complicated by adding something that is zero, e.g., some expression with u−(at+U0)u−(at+U0), say (u−(at+U0))4(u−(at+U0))4, so thatf(u,t)=a+(u−(at+U0))4.(17)(17)f(u,t)=a+(u−(at+U0))4.

We implement our special ff and the exact solution in two functions f and u_exact, and compute a scalar measure of the error. As a above, we place the test inside a test function and make an assertion that the error is sufficiently close to zero:

def test_ForwardEuler_against_linear_solution():

"""Use knowledge of an exact numerical solution for testing."""

def f(u, t):

return 0.2 + (u - u_exact(t))**4

def u_exact(t):

return 0.2*t + 3

u, t = ForwardEuler(f, U0=u_exact(0), T=3, n=5)

u_e = u_exact(t)

error = np.abs(u_e - u).max()

success = error < 1E-14

assert success, '|exact - u| = %g != 0' % error

A “silent” execution of the function indicates that the test works.

The shown functions are collected in a file ForwardEuler_func.py.

From discrete to continuous solution

The numerical solution of an ODE is a discrete function in the sense that we only know the function values u0,u1,ldots,uNu0,u1,ldots,uN at some discrete points t0,t1,…,tNt0,t1,…,tN in time. What if we want to know uu between two computed points? For example, what is uubetween titi and ti+1ti+1, say at the midpoint t=ti+12Δtt=ti+12Δt? One can use interpolation techniques to find this value uu. The simplest interpolation technique is to assume that uu varies linearly on each time interval. On the interval [ti,ti+1][ti,ti+1] the linear variation of uubecomesu(t)=ui+ui+1−uiti+1−ti(t−ti).u(t)=ui+ui+1−uiti+1−ti(t−ti).We can then evaluate, e.g., u(ti+12Δt)u(ti+12Δt) from this formula and show that it becomes (ui+ui+1)/2(ui+ui+1)/2.

The function scitools.std.wrap2callable can automatically convert a discrete function to a continuous function:

from scitools.std import wrap2callable u_cont = wrap2callable((t, u))

From the arrays t and u, wrap2callable constructs a continuous function based on linear interpolation. The result u_cont is a Python function that we can evaluate for any value of its argument t:

dt = t[i+1] - t[i] t = t[i] + 0.5*dt value = u_cont(t)

In general, the wrap2callable function is handy when you have computed some discrete function and you want to evaluate this discrete function at any point.

Switching numerical method

There are numerous alternative numerical methods for solving (13). One of the simplest is Heun’s method:u∗uk+1=uk+Δtf(uk,tk),=uk+12Δtf(uk,tk)+12Δtf(u∗,tk+1).(18)(19)(18)u∗=uk+Δtf(uk,tk),(19)uk+1=uk+12Δtf(uk,tk)+12Δtf(u∗,tk+1).This scheme is easily implemented in the ForwardEuler function by replacing the Forward Euler formula

u[k+1] = u[k] + dt*f(u[k], t[k])

u_star = u[k] + dt*f(u[k], t[k])

u[k+1] = u[k] + 0.5*dt*f(u[k], t[k]) + 0.5*dt*f(u_star, t[k+1])

We can, especially if f is expensive to calculate, eliminate a call f(u[k], t[k]) by introducing an auxiliary variable:

f_k = f(u[k], t[k])

u_star = u[k] + dt*f_k

u[k+1] = u[k] + 0.5*dt*f_k + 0.5*dt*f(u_star, t[k+1])

Class implementation

As an alternative to the general ForwardEuler function in the section Function implementation, we shall now implement the numerical method in a class. This requires, of course, familiarity with the class concept in Python.

Class wrapping of a function

Let us start with simply wrapping the ForwardEuler function in a class ForwardEuler_v1 (the postfix _v1 indicates that this is the very first class version). That is, we take the code in the ForwardEuler function and distribute it among methods in a class.

The constructor can store the input data of the problem and initialize data structures, while a solve method can perform the time stepping procedure:

import numpy as np

class ForwardEuler_v1(object):

def __init__(self, f, U0, T, n):

self.f, self.U0, self.T, self.n = f, dt, U0, T, n

self.dt = T/float(n)

self.u = np.zeros(n+1)

self.t = np.zeros(n+1)

def solve(self):

"""Compute solution for 0 <= t <= T."""

self.u[0] = float(self.U0)

self.t[0] = float(0)

for k in range(self.n):

self.k = k

self.t[k+1] = self.t[k] + self.dt

self.u[k+1] = self.advance()

return self.u, self.t

def advance(self):

"""Advance the solution one time step."""

u, dt, f, k, t = \

self.u, self.dt, self.f, self.k, self.t

u_new = u[k] + dt*f(u[k], t[k])

return u_new

Note that we have introduced a third class method, advance, which isolates the numerical scheme. The motivation is that, by observation, the constructor and the solve method are completely general as they remain unaltered if we change the numerical method (at least this is true for a wide class of numerical methods). The only difference between various numerical schemes is the updating formula. It is therefore a good programming habit to isolate the updating formula so that another scheme can be implemented by just replacing the advance method – without touching any other parts of the class.

Also note that we in the advance method “strip off” the self prefix by introducing local symbols with exactly the same names as in the mathematical specification of the numerical method. This is important if we want a visually one-to-one correspondence between the mathematics and the computer code.

Application of the class goes as follows, here for the model problem u′=uu′=u, u(0)=1u(0)=1:

def f(u, t):

return u

solver = ForwardEuler_v1(f, U0=1, T=3, n=15)

u, t = solver.solve()

Switching numerical method

Implementing, for example, Heun’s method (18)–(19) is a matter of replacing the advance method by

def advance(self):

"""Advance the solution one time step."""

u, dt, f, k, t = \

self.u, self.dt, self.f, self.k, self.t

u_star = u[k] + dt*f(u[k], t[k])

u_new = u[k] + \

0.5*dt*f(u[k], t[k]) + 0.5*dt*f(u_star, t[k+1])

return u_new

Checking input data is always a good habit, and in the present class the constructor may test that the f argument is indeed an object that can be called as a function:

if not callable(f):

raise TypeError('f is %s, not a function' % type(f))

Any function f or any instance of a class with a __call__ method will make callable(f) evaluate to True.

A more flexible class

Say we solve u′=f(u,t)u′=f(u,t) from t=0t=0 to t=T1t=T1. We can continue the solution for t>T1t>T1 simply by restarting the whole procedure with initial conditions at t=T1t=T1. Hence, the implementation should allow several consequtive solve steps.

Another fact is that the time step ΔtΔt does not need to be constant. Allowing small ΔtΔt in regions where uu changes rapidly and letting ΔtΔt be larger in areas where uu is slowly varying, is an attractive solution strategy. The Forward Euler method can be reformulated for a variable time step size tk+1−tktk+1−tk:uk+1=uk+(tk+1−tk)f(uk,tk).(20)(20)uk+1=uk+(tk+1−tk)f(uk,tk).Similarly, Heun’s method and many other methods can be formulated with a variable step size simply by replacing ΔtΔt with tk+1−tktk+1−tk. It then makes sense for the user to provide a list or array with time points for which a solution is sought: t0,t1,…,tnt0,t1,…,tn. The solve method can accept such a set of points.

The mentioned extensions lead to a modified class:

class ForwardEuler(object):

def __init__(self, f):

if not callable(f):

raise TypeError('f is %s, not a function' % type(f))

self.f = f

def set_initial_condition(self, U0):

self.U0 = float(U0)

def solve(self, time_points):

"""Compute u for t values in time_points list."""

self.t = np.asarray(time_points)

self.u = np.zeros(len(time_points))

# Assume self.t[0] corresponds to self.U0

self.u[0] = self.U0

for k in range(len(self.t)-1):

self.k = k

self.u[k+1] = self.advance()

return self.u, self.t

def advance(self):

"""Advance the solution one time step."""

u, f, k, t = self.u, self.f, self.k, self.t

dt = t[k+1] - t[k]

u_new = u[k] + dt*f(u[k], t[k])

return u_new

Usage of the class

We must instantiate an instance, call the set_initial_condition method, and then call the solve method with a list or array of the time points we want to compute uu at:

def f(u, t):

"""Right-hand side function for the ODE u' = u."""

return u

solver = ForwardEuler(f)

solver.set_initial_condition(2.5)

u, t = solver.solve(np.linspace(0, 4, 21))

A simple plot(t, u) command can visualize the solution.

Verification

It is natural to perform the same verifications as we did for the ForwardEuler function in the section Verifying the implementation. First, we test the numerical solution against hand calculations. The implementation makes use of the same test function, just the way of calling up the numerical solver is different:

def test_ForwardEuler_against_hand_calculations():

"""Verify ForwardEuler against hand calc. for 2 time steps."""

solver = ForwardEuler(lambda u, t: u)

solver.set_initial_condition(1)

u, t = solver.solve([0, 0.1, 0.2])

exact = np.array([1, 1,1, 1.21]) # hand calculations

error = np.abs(exact - u).max()

assert error < 1E-14, '|exact - u| = %g != 0' % error

We have put some efforts into making this test very compact, mainly to demonstrate how Python allows very short, but still readable code. With a lambda function we can define the right-hand side of the ODE directly in the constructor argument. The solve method accepts a list, tuple, or array of time points and turns the data into an array anyway. Instead of a separate boolean variable success we have inserted the test inequality directly in the assert statement.

The second verification method applies the fact that the Forward Euler scheme is exact for a uu that is linear in tt. We perform a slightly more complicated test than in the section Verifying the implementation: now we first solve for the points 0,0.4,1,1.20,0.4,1,1.2, and then we continue the solution process for t1=1.4t1=1.4 and t2=1.5t2=1.5.

def test_ForwardEuler_against_linear_solution():

"""Use knowledge of an exact numerical solution for testing."""

u_exact = lambda t: 0.2*t + 3

solver = ForwardEuler(lambda u, t: 0.2 + (u - u_exact(t))**4)

# Solve for first time interval [0, 1.2]

solver.set_initial_condition(u_exact(0))

u1, t1 = solver.solve([0, 0.4, 1, 1.2])

# Continue with a new time interval [1.2, 1.5]

solver.set_initial_condition(u1[-1])

u2, t2 = solver.solve([1.2, 1.4, 1.5])

# Append u2 to u1 and t2 to t1

u = np.concatenate((u1, u2))

t = np.concatenate((t1, t2))

u_e = u_exact(t)

error = np.abs(u_e - u).max()

assert error < 1E-14, '|exact - u| = %g != 0' % error

Making a module

It is a well-established programming habit to have class implementations in files that act as Python modules. This means that all code is collected within classes or functions, and that the main program is executed in a test block. Upon import, no test or demonstration code should be executed.

Everything we have made so far is in classes or functions, so the remaining task to make a module, is to construct the test block.

if __name__ == '__main__':

import sys

if len(sys.argv) >= 2 and sys.argv[1] == 'test':

test_ForwardEuler_v1_against_hand_calculations()

test_ForwardEuler_against_hand_calculations()

test_ForwardEuler_against_linear_solution()

The ForwardEuler_func.py file with functions from the sections Function implementation and Verifying the implementation is in theory a module, but not sufficiently cleaned up. Exercise 15: Clean up a file to make it a module encourages you to turn the file into a proper module.

Remark

We do not need to call the test functions from the test block, since we can let nose run the tests automatically, by nosetests -s ForwardEuler.py.

Logistic growth via a function-based approach

A more exciting application than the verification problems above is to simulate logistic growth of a population. The relevant ODE readsu′(t)=αu(t)(1−u(t)R).u′(t)=αu(t)(1−u(t)R).The mathematical f(u,t)f(u,t) function is simply the right-hand side of this ODE. The corresponding Python function is

def f(u, t):

return alpha*u*(1 - u/R)

where alpha and R are global variables that correspond to αα and RR. These must be initialized before calling the ForwardEulerfunction (which will call the f(u,t) above):

alpha = 0.2 R = 1.0 from ForwardEuler_func2 import ForwardEuler u, t = ForwardEuler(f, U0=0.1, T=40, n=400)

We have in this program assumed that Exercise 15: Clean up a file to make it a module has been carried out to clean up the ForwardEuler_func.py file such that it becomes a proper module file with the name ForwardEuler_func2.py.

With u and t computed we can proceed with visualizing the solution (see Figure 2):

from matplotlib.pyplot import *

plot(t, u)

xlabel('t'); ylabel('u')

title('Logistic growth: alpha=%s, R=%g, dt=%g' %

(alpha, R, t[1]-t[0]))

savefig('tmp.pdf'); savefig('tmp.png')

show()

The complete code appears in the file logistic_func.py.

Figure 2: Plot of the solution of the ODE problem u′=0.2u(1−u)u′=0.2u(1−u), u(0)=0.1u(0)=0.1.

Logistic growth via a class-based approach

The task of this section is to redo the implementation of the section Logistic growth via a function-based approach using a problem class to store the physical parameters and the f(u,t)f(u,t) function, and using class ForwardEuler from the section Class implementation to solve the ODE. Comparison with the code in the section Logistic growth via a function-based approach will then exemplify what the difference between a function-based and a class-based implementation is. There will be two major differences. One is related to technical differences between programming with functions and programming with classes. The other is psychological: when doing class programming one often puts more efforts into making more functions, a complete module, a user interface, more testing, etc. A function-based approach, and in particular the present “flat” MATLAB-style program, tends to be more ad hoc and contain less general, reusable code. At least this is the author’s experience over many years when observing students and professionals create different style of code with different type of programming techniques.

The style adopted for this class-based example have several important ingredients motivated by professional programming habits:

- Modules are imported as

import moduleand calls to functions in the module are therefore prefixed with the module name such that we can easily see where different functionality comes from. - All information about the original ODE problem is collected in a class.

- Physical and numerical parameters can be set on the command line.

- The main program is collected in a function.

- The implementation takes the form of a module such that other programs can reuse the class for representing data in a logistic problem.

The problem class

Class Logistic holds the parameters of the ODE problem: U0U0, αα, RR, and TT as well as the f(u,t)f(u,t) function. Whether TT should be a member of class Logistic or not is a matter of taste, but the appropriate size of TT is strongly linked to the other parameters so it is natural to specify them together. The number of time intervals, nn, used in the numerical solution method is not a part of class Logistic since it influences the accuracy of the solution, but not the qualitative properties of the solution curve as the other parameters do.

The f(u,t)f(u,t) function is naturally implemented as a __call__ method such that the problem instance can act as both an instance and a callable function at the same time. In addition, we include a __str__ for printing out the ODE problem. The complete code for the class looks like

class Logistic(object):

"""Problem class for a logistic ODE."""

def __init__(self, alpha, R, U0, T):

self.alpha, self.R, self.U0, self.T = alpha, float(R), U0, T

def __call__(self, u, t):

"""Return f(u,t) for the logistic ODE."""

return self.alpha*u*(1 - u/self.R)

def __str__(self):

"""Return ODE and initial condition."""

return "u'(t) = %g*u*(1 - u/%g), t in [0, %g]\nu(0)=%g" % \

(self.alpha, self.R, self.T, self.U0)

Getting input from the command line

We decide to specify αα, RR, U0U0, TT, and nn, in that order, on the command line. A function for converting the command-line arguments into proper Python objects can be

def get_input():

"""Read alpha, R, U0, T, and n from the command line."""

try:

alpha = float(sys.argv[1])

R = float(sys.argv[2])

U0 = float(sys.argv[3])

T = float(sys.argv[4])

n = float(sys.argv[5])

except IndexError:

print 'Usage: %s alpha R U0 T n' % sys.argv[0]

sys.exit(1)

return alpha, R, U0, T, n

We have used a standard a try-except block to handle potential errors because of missing command-line arguments. A more user-friendly alternative would be to allow option-value pairs such that, e.g., TT can be set by --T 40 on the command line, but this requires more programming (with the argparse module).

Import statements

The import statements necessary for the problem solving process are written as

import ForwardEuler import numpy as np import matplotlib.pyplot as plt

The two latter statements with their abbreviations have evolved as a standard in Python code for scientific computing.

Solving the problem

The remaining necessary statements for solving a logistic problem are collected in a function

def logistic():

alpha, R, U0, T, n = get_input()

problem = Logistic(alpha=alpha, R=R, U0=U0)

solver = ForwardEuler.ForwardEuler(problem)

solver.set_initial_condition(problem.U0)

time_points = np.linspace(0, T, n+1)

u, t = solver.solve(time_points)

plt.plot(t, u)

plt.xlabel('t'); plt.ylabel('u')

plt.title('Logistic growth: alpha=%s, R=%g, dt=%g'

% (problem.alpha, problem.R, t[1]-t[0]))

plt.savefig('tmp.pdf'); plt.savefig('tmp.png')

plt.show()

Making a module

Everything we have created is either a class or a function. The only remaining task to ensure that the file is a proper module is to place the call to the “main” function logistic in a test block:

if __name__ == '__main__':

logistic()

The complete module is called logistic_class.py.

Pros and cons of the class-based approach

If we quickly need to solve an ODE problem, it is tempting and efficient to go for the function-based code, because it is more direct and much shorter. A class-based module, with a user interface and often also test functions, usually gives more high-quality code that pays off when the software is expected to have a longer life time and will be extended to more complicated problems.

A pragmatic approach is to first make a quick function-based code, but refactor that code to a more reusable and extensible class version with test functions when you experience that the code frequently undergo changes. The present simple logistic ODE problem is, in my honest opinion, not complicated enough to defend a class version for practical purposes, but the primary goal here was to use a very simple mathematical problem for illustrating class programming.